The rapid integration of Artificial Intelligence (AI) into the enterprise has moved beyond the pilot phase and into the core of product strategy. However, the speed of deployment has often outpaced the development of guardrails.

Organizations now face the dual challenge of governing a non-deterministic technology while ensuring that the human in the loop remains empowered rather than marginalized.

1. AI Governance at the Organizational Level

AI governance is no longer just a compliance checkbox; it is a core element of corporate strategy.

At the organizational level, this typically involves creating a cross-functional AI Ethics Committee made up of legal, engineering, data science, and design leadership.

Effective governance focuses on three primary pillars:

Traceability and Transparency

Organizations must maintain a clear lineage of data used to train models and provide explainability for AI-driven decisions.
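To make lineage concrete, each AI-driven decision can be logged together with the model version and the training data behind it. The sketch below is a minimal illustration. `DecisionRecord` and its field names are hypothetical and do not follow any standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Tuple


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit record tying one AI decision back to its lineage."""

    decision_id: str
    model_version: str
    training_datasets: Tuple[str, ...]  # IDs of the data snapshots the model was trained on
    inputs_summary: str  # what the model saw (summarized; avoid storing raw personal data)
    output: str
    explanation: str  # human-readable rationale surfaced to reviewers and users
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Serialize for an append-only audit log; tuples become JSON arrays.
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    decision_id="dec-0001",
    model_version="credit-risk-v2.3",
    training_datasets=("loans-2023-q4", "bureau-snapshot-2024-01"),
    inputs_summary="income band, account tenure",
    output="approved",
    explanation="Income band and tenure both above policy thresholds.",
)
print(record.to_json())
```

The record is frozen, so an audit entry cannot be silently edited after it is written.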

Accountability Frameworks

This means defining who is responsible when an AI system fails or produces biased outputs, shifting the focus from ‘the algorithm made a mistake’ to clear human ownership.

Risk Categorization

This means categorizing AI use cases by risk level (e.g., minimal, limited, high, or unacceptable, as in the EU AI Act) to determine how much scrutiny each one requires before deployment.
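A risk-categorization framework can be encoded as a simple deployment gate. This sketch assumes four tiers loosely modeled on the EU AI Act (minimal, limited, high, unacceptable). The classification flags and review steps are hypothetical placeholders for whatever legal and ethics review would actually define.

```python
from enum import Enum
from typing import List


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical policy: reviews required before a use case in each tier may ship.
REQUIRED_REVIEWS = {
    RiskTier.MINIMAL: ["product owner sign-off"],
    RiskTier.LIMITED: ["product owner sign-off", "transparency notice review"],
    RiskTier.HIGH: ["AI Ethics Committee review", "bias audit", "human-oversight plan"],
}


def classify(use_case: dict) -> RiskTier:
    """Toy classifier driven by self-reported flags; real criteria come from legal review."""
    if use_case.get("manipulates_or_socially_scores_people"):
        return RiskTier.UNACCEPTABLE
    if use_case.get("affects_access_to_jobs_credit_or_services"):
        return RiskTier.HIGH
    if use_case.get("generates_user_facing_content"):
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def deployment_gate(use_case: dict) -> List[str]:
    """Return the reviews a use case needs, or refuse outright for unacceptable risk."""
    tier = classify(use_case)
    if tier is RiskTier.UNACCEPTABLE:
        raise ValueError("Unacceptable-risk use case: do not deploy.")
    return REQUIRED_REVIEWS[tier]


print(deployment_gate({"affects_access_to_jobs_credit_or_services": True}))
```

The unacceptable tier deliberately has no review list. No amount of sign-off makes it deployable.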

2. The AI-Era Ethical Design Standards

Traditional design ethics focused on accessibility and on avoiding dark patterns. In the AI era, these standards must be updated to address the unique behaviors of generative and predictive systems.

From Static to Probabilistic

Designers must now account for hallucinations and varied outputs. Ethical standards must mandate Confidence Indicators, where the UI explicitly informs the user how certain the AI is about a specific result.
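One way to implement a Confidence Indicator is to map a confidence score to a small set of user-facing labels. This sketch assumes the system exposes a calibrated score between 0 and 1, which many generative models do not provide out of the box. The thresholds and wording are illustrative only.

```python
def confidence_indicator(score: float) -> str:
    """Map a calibrated confidence score in [0, 1] to a user-facing label.

    The thresholds are hypothetical and should be tuned so that each label
    matches how often answers at that level are actually correct.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be between 0 and 1, got {score}")
    if score >= 0.85:
        return "High confidence"
    if score >= 0.6:
        return "Medium confidence: double-check key details"
    return "Low confidence: verify before relying on this"


for s in (0.93, 0.7, 0.31):
    print(s, confidence_indicator(s))
```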

Algorithmic Bias Mitigation

Ethical design now requires Red Teaming sessions in which designers deliberately try to prompt the system into producing biased or harmful content, then use the findings to build better guardrails.

Consent in the Age of Synthesis

Standards must evolve to handle Data Dignity, ensuring users understand how their inputs contribute to model training and providing clear opt-outs that do not break the core experience.

3. The Blurring Lines: UX, Product, and Engineering

AI is dissolving the traditional silos of the product trio (product management, design, and engineering).

The boundary between what a system can do (Engineering) and how it should behave (UX) is thinning.

UX as System Architect

UX designers are increasingly required to understand model latency, token limits, and prompt engineering. The interface is no longer just a series of buttons but a conversation or a latent space.

Design-Led Engineering

As models become more accessible through APIs, the differentiator for products is increasingly not the underlying LLM but the Design Layer that contextualizes it. UX designers are becoming Vibe Coders, shaping the model’s personality and guardrails directly.

The Rise of the AI Interaction Designer

This emerging role blends linguistic skill, behavioral psychology, and data science to manage the relationship between humans and autonomous agents.

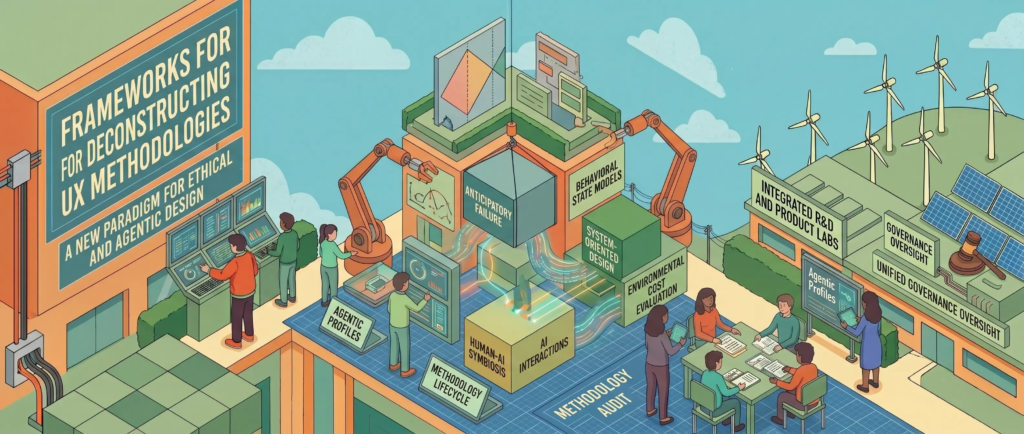

4. Frameworks for Deconstructing UX Methodologies

Standard UX methodologies like the Double Diamond are usually applied as a linear path toward a solved state. AI requires a move toward Circular and Adaptive Design.

The Black Box Deconstruction

Instead of designing only for the happy path, designers should use the Anticipatory Failure Framework: map every way an AI might fail (latency, hallucination, tone-deafness) and design a graceful degradation for each.
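The failure map can be expressed as an ordered list of detectors, each paired with a graceful fallback. The `AIResult` fields, thresholds, and heuristics in this sketch are hypothetical. In particular, treating an answer with no cited sources as a likely hallucination is only a crude proxy.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class AIResult:
    text: str
    latency_s: float
    sources: List[str] = field(default_factory=list)  # documents the answer was grounded in
    sensitive_context: bool = False  # e.g. billing dispute, health, bereavement


CASUAL_MARKERS = ("lol", "no worries", "!!")  # hypothetical tone red flags

# Ordered failure map: (failure mode, detector, graceful fallback shown to the user).
# In a live UI the latency fallback would fire on a timer before the result arrives;
# here it is checked after the fact to keep the sketch simple.
FAILURE_MODES: List[Tuple[str, Callable[[AIResult], bool], str]] = [
    ("latency", lambda r: r.latency_s > 5.0,
     "This is taking longer than usual. You can keep waiting or browse the help center."),
    ("hallucination", lambda r: not r.sources,
     "I couldn't find a source for this, so I'd rather not guess. Could you rephrase?"),
    ("tone", lambda r: r.sensitive_context
     and any(m in r.text.lower() for m in CASUAL_MARKERS),
     "Let me connect you with someone from our team who can help."),
]


def respond(result: AIResult) -> Tuple[str, Optional[str]]:
    """Return (fallback, failure mode) for the first detector that fires, else (original text, None)."""
    for name, detect, fallback in FAILURE_MODES:
        if detect(result):
            return fallback, name
    return result.text, None


print(respond(AIResult("Done.", 1.0)))
```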

Beyond Personas to Agentic Profiles

Traditional personas are static. Designers should adopt Behavioral State Models that map how an AI agent’s personality should shift based on the user’s emotional state or the sensitivity of the task.
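A Behavioral State Model can start as an explicit rule function from (user state, task sensitivity) to agent behavior. The states, behaviors, and rules below are hypothetical examples of what a team might define. They are not an established taxonomy.

```python
from dataclasses import dataclass
from enum import Enum


class UserState(Enum):
    CALM = "calm"
    FRUSTRATED = "frustrated"
    ANXIOUS = "anxious"


class Sensitivity(Enum):
    LOW = "low"    # e.g. drafting a party invitation
    HIGH = "high"  # e.g. disputing a medical bill


@dataclass(frozen=True)
class Behavior:
    tone: str
    verbosity: str
    humor_allowed: bool
    offer_human_handoff: bool


def agent_behavior(state: UserState, sensitivity: Sensitivity) -> Behavior:
    """Hypothetical rules for how the agent's personality shifts with context."""
    high = sensitivity is Sensitivity.HIGH
    if state is UserState.FRUSTRATED:
        # Get out of the way: short answers and an easy exit to a person.
        return Behavior("direct", "brief", humor_allowed=False, offer_human_handoff=True)
    if state is UserState.ANXIOUS:
        # Slow down and reassure; escalate to a person only for sensitive tasks.
        return Behavior("reassuring", "step-by-step", humor_allowed=False, offer_human_handoff=high)
    # Calm user: personality can show unless the task itself is sensitive.
    return Behavior("neutral" if high else "friendly", "normal",
                    humor_allowed=not high, offer_human_handoff=high)


print(agent_behavior(UserState.ANXIOUS, Sensitivity.HIGH))
```

Writing the rules as code, rather than as a persona document, forces the team to decide what happens in every combination of state and sensitivity.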

De-centering the User

In System-Oriented Design, we recognize that the user is part of an ecosystem. We must evaluate the Environmental and Social Cost of a feature (e.g., the compute power required for a specific AI task) as part of the UX evaluation.

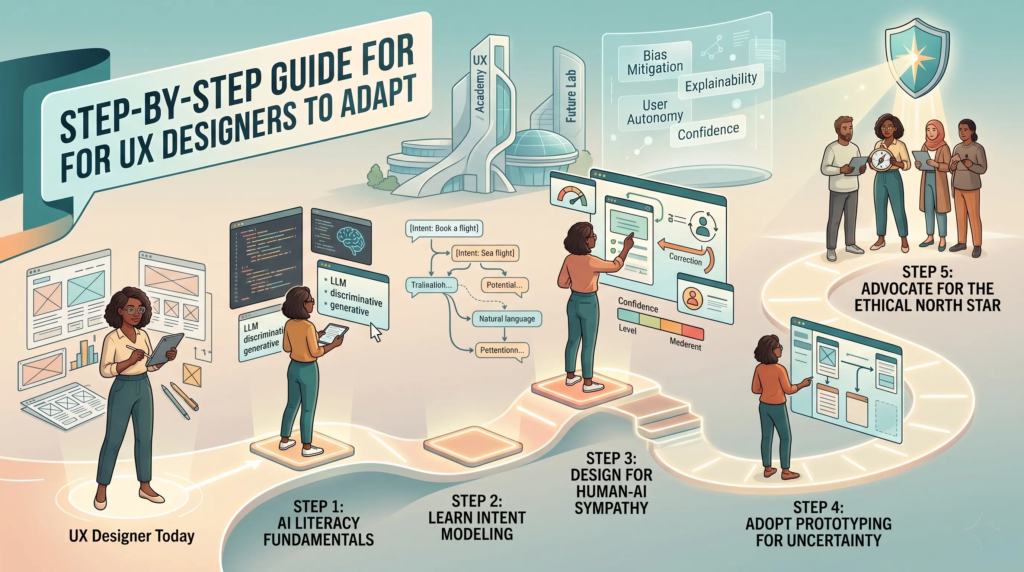

5. Step-by-Step Guide for UX Designers to Adapt

To thrive in this new reality, UX designers must pivot from being ‘screen builders’ to ‘orchestrators of intelligence’.

Step 1: Master the AI Literacy Fundamentals

Understand the difference between discriminative and generative AI.

Learn the basics of how LLMs work, including concepts like temperature, top-p, and context windows.
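Temperature and top-p are easiest to grasp with a small worked example. The sketch below shows the standard idea. Temperature divides the model's raw scores (logits) before a softmax. Top-p (nucleus sampling) keeps only the smallest set of most-likely tokens whose cumulative probability reaches p. The logits are made-up numbers, and real inference libraries implement these steps internally.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Turn raw scores into probabilities; T < 1 sharpens the distribution, T > 1 flattens it."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_candidates(probs: List[float], p: float) -> List[int]:
    """Indices of the smallest set of most-likely tokens whose cumulative probability reaches p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    return kept


logits = [2.0, 1.0, 0.5, 0.1]  # made-up scores for four candidate tokens
for t in (0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(x, 2) for x in probs], top_p_candidates(probs, 0.9))
```

Lower temperature concentrates probability on the top token, so fewer tokens tend to survive the top-p cut. Higher temperature does the opposite. This is why the same prompt can feel predictable or surprising depending on these settings.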

Step 2: Learn Intent Modeling

Shift focus from clicks and scrolls to user intent.

Practice mapping out natural language prompts and the various ways a user might ask for the same thing.
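A toy version of intent modeling collects several phrasings per intent and matches new utterances by word overlap (Jaccard similarity). The intents, example phrases, and threshold are hypothetical. Production systems would more likely use embeddings or an LLM-based classifier, but the design exercise of listing many phrasings per intent stays the same.

```python
import re
from typing import Dict, List, Set

# Hypothetical intent catalog: many phrasings that all mean the same thing.
INTENT_EXAMPLES: Dict[str, List[str]] = {
    "cancel_subscription": [
        "cancel my subscription",
        "stop charging me",
        "i want to end my plan",
        "how do i unsubscribe",
    ],
    "change_password": [
        "reset my password",
        "i forgot my password",
        "change my login details",
    ],
}


def words(text: str) -> Set[str]:
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z']+", text.lower()))


def match_intent(utterance: str, min_similarity: float = 0.3) -> str:
    """Return the intent whose closest example phrasing overlaps most (Jaccard), else 'unknown'."""
    said = words(utterance)
    best_intent, best_score = "unknown", 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            ex = words(example)
            score = len(said & ex) / len(said | ex)
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= min_similarity else "unknown"


print(match_intent("Please stop charging me"))
```

The `unknown` result matters as much as the matches. It marks where the design needs a clarifying question instead of a guess.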

Step 3: Design for Human-AI Sympathy

Focus on the hand-off.

When does the human take over from the AI?

Build interfaces that allow for easy correction, steering, and verification of AI outputs.
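The hand-off decision can be written down as an explicit policy, which makes it easier to discuss with engineering. This sketch assumes the system provides a confidence score and a flag for whether the action can be undone. The thresholds and the three outcomes are illustrative choices.

```python
from enum import Enum


class Handoff(Enum):
    AUTO_APPLY = "apply automatically, with a visible undo"
    CONFIRM = "show as a suggestion the user can accept, edit, or reject"
    HUMAN = "hand control back to the user or a human agent"


def decide_handoff(confidence: float, reversible: bool,
                   auto_threshold: float = 0.9,
                   suggest_threshold: float = 0.5) -> Handoff:
    """Hypothetical policy: the less certain or less reversible an action, the more control the human keeps."""
    if confidence >= auto_threshold and reversible:
        return Handoff.AUTO_APPLY
    if confidence >= suggest_threshold:
        return Handoff.CONFIRM
    return Handoff.HUMAN


print(decide_handoff(0.95, reversible=True).value)
print(decide_handoff(0.95, reversible=False).value)
print(decide_handoff(0.30, reversible=True).value)
```

Irreversible actions never auto-apply, however confident the model is. This keeps verification with the human exactly where a mistake would be costly.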

Step 4: Adopt Prototyping for Uncertainty

Move away from static Figma mocks.

Use tools that support dynamic data (such as Framer or a basic Next.js app) to test how your designs handle unpredictable AI responses in real time.
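For prototyping, a mock backend that deliberately returns awkward responses tests a design better than a single happy-path string. The sketch is in Python for consistency with the other examples. The scenarios, delays, and error code are hypothetical, and a Framer or Next.js prototype would need an equivalent mock in JavaScript.

```python
import random
from dataclasses import dataclass
from typing import Optional

SCENARIOS = ("normal", "long", "empty", "slow", "error")


@dataclass
class MockResponse:
    text: str
    delay_s: float  # simulated latency for the prototype to display (spinner, skeleton)
    error: Optional[str] = None


def mock_ai_response(prompt: str, rng: random.Random) -> MockResponse:
    """Return a randomly chosen edge case instead of a single happy-path string."""
    scenario = rng.choice(SCENARIOS)
    if scenario == "normal":
        return MockResponse(f"Here is a short answer about {prompt}.", 0.8)
    if scenario == "long":
        return MockResponse("This answer keeps going. " * 200, 2.5)  # overflow test
    if scenario == "empty":
        return MockResponse("", 0.5)  # model returned nothing
    if scenario == "slow":
        return MockResponse("Thanks for waiting. Here is the answer.", 12.0)
    return MockResponse("", 1.0, error="rate_limited")  # hypothetical error code


rng = random.Random(7)  # fixed seed so a test session can be replayed
for _ in range(5):
    r = mock_ai_response("refund policy", rng)
    print(r.error or f"{len(r.text)} chars after {r.delay_s}s")
```

The mock returns `delay_s` instead of sleeping. The prototype can then decide how to present the wait, such as a spinner, a skeleton, or a "still working" message.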

Step 5: Advocate for the Ethical North Star

Become the voice in the room that asks about the long-term impact of an AI feature on human agency.

If the AI does everything for the user, what happens to the user’s skill and autonomy over time?

By shifting focus from the surface-level UI to the deeper logic of the interaction, designers can ensure that the AI era remains human-centric, ethical, and functionally superior.